90% of our users are mobile and I am sure yours are too. They carry around your business in their pockets and we carry their hopes and dreams 🙂

Now, as many as 85% of these users are on low-end mobile devices.

This is the new reality for me and my team here at shaadi.com. I work on a product called sangam.com, and every change we plan to ship to production has to respect a set of constraints.

Things that work on the desktop will not work right on mobile

The hard truth about building SPAs with JavaScript

Downloading and executing a large JavaScript bundle in your web app slows down both rendering and interactions, and your page stays unresponsive for that whole time.

A recent Google study indicated that 53% of people will abandon a mobile site if it takes more than three seconds to load. Our site used to take far longer than that (about 20 seconds).

Of course, page speed is not the only metric we should care about. The bigger culprit is JavaScript execution time: the time the browser takes to execute the JavaScript that makes the page interactive.

This is what our Lighthouse score looked like before we started working on performance:

The Problem 🤔

JavaScript is still the most expensive resource we send to mobile phones, because it can delay interactivity in large ways. — The Cost Of JavaScript In 2018

We were shipping more than 700 KB of gzipped JavaScript (almost 2.5 MB unzipped), all of which had to be downloaded and parsed before the page became interactive. It took so long that many of our users left the app before they saw anything on the page.

It soon became evident to us that our product was suffering badly due to performance issues and something had to be done to solve this problem.

Here’s what we fixed

First, like all JavaScript performance enthusiasts, we tried code splitting, which significantly decreased the amount of JavaScript we were sending to the end user.

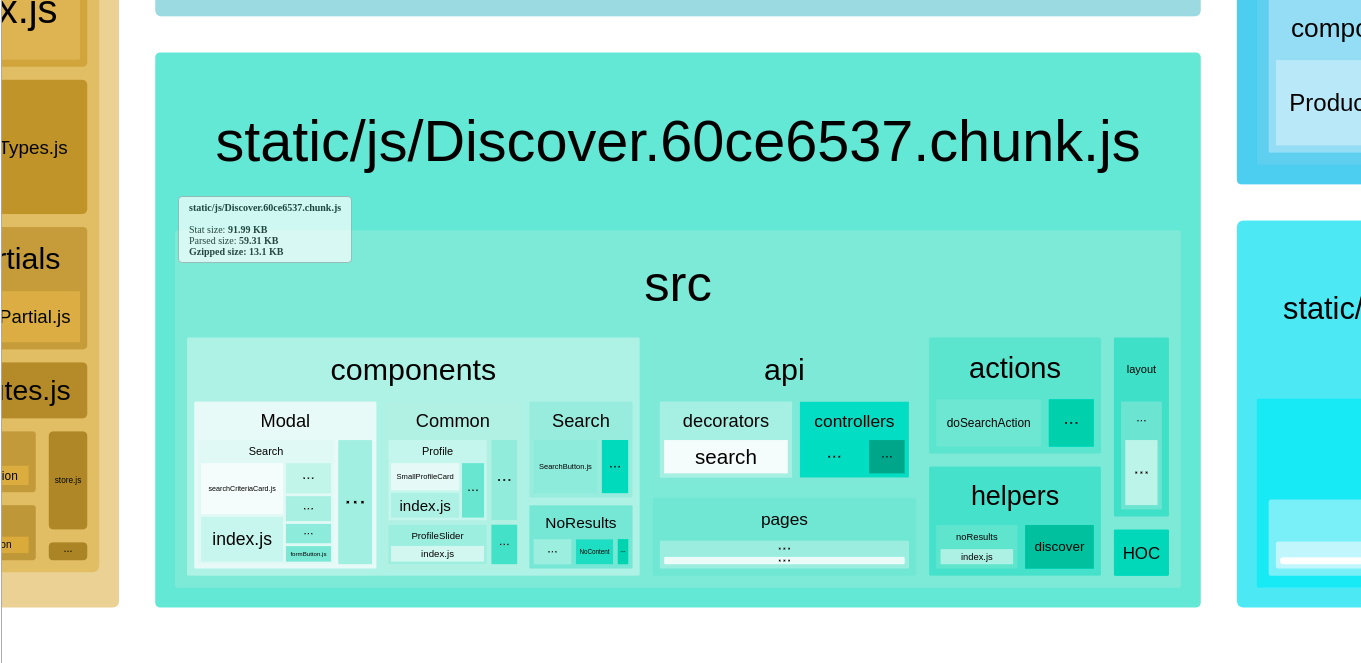

Initially, we split our components by route using react-loadable, a very powerful React library, which created an individual chunk for each defined route. But the main problem wasn’t solved: our main bundle was still heavy. Most of the actions, reducers, decorators, and API controllers were still part of the main bundle, even though they were only needed for specific routes/components.
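To make the idea concrete, here is a minimal sketch of route-based splitting in plain JavaScript. It is not react-loadable itself: the `createRouteLoader` helper and the route paths are hypothetical. In a real app each loader would be a dynamic `import()` (for example `() => import('./routes/Profile')`), which the bundler turns into a separate chunk; here the loaders are stand-ins so the snippet runs on its own.

```javascript
// Hypothetical helper: maps each route to a loader and fetches its chunk at most once.
function createRouteLoader(loaders) {
  const table = new Map(Object.entries(loaders)); // route path -> loader function
  const pending = new Map(); // route path -> promise for the loaded module

  return function load(path) {
    const loader = table.get(path);
    if (!loader) {
      return Promise.reject(new Error(`No chunk registered for route: ${path}`));
    }
    if (!pending.has(path)) {
      // First visit: start the download and remember the promise.
      // If the download fails, forget it so a later visit can retry.
      const chunk = loader().catch((err) => {
        pending.delete(path);
        throw err;
      });
      pending.set(path, chunk);
    }
    // Later visits reuse the same promise instead of downloading again.
    return pending.get(path);
  };
}

// Stand-in loaders; in the app these would be () => import('./routes/...').
let profileDownloads = 0;
const loadRoute = createRouteLoader({
  '/profile': () => {
    profileDownloads += 1;
    return Promise.resolve({ default: 'ProfilePage' });
  },
  '/inbox': () => Promise.resolve({ default: 'InboxPage' }),
});

loadRoute('/profile').then((mod) => console.log('rendering', mod.default));
```

The main bundle only needs the small routing table; the code for each page stays out of it until the user actually navigates there.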

We then realised we could also split our actions, decorators and API controllers by route/component. We attacked the problem with a solution very similar to the one Dan Abramov suggested for code splitting in Redux in one of his GitHub gists, but instead of splitting reducers, we split away actions, decorators and API controllers.

Keep a look out for a future post on code splitting redux
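Until then, a rough sketch of the idea might look like this. It is an illustration only, with hypothetical names (`createFeatureRegistry`, `profile`, `fetchProfile`), and should not be read as the exact code running in production: the main bundle keeps a tiny registry, and each route chunk registers its own actions and API controller when it loads.

```javascript
// Hypothetical registry that lives in the main bundle. Route chunks register
// their own actions and API controllers when they are loaded.
function createFeatureRegistry() {
  const features = new Map(); // feature name -> { actions, api }

  return {
    register(name, feature) {
      // Registering twice is harmless: the first registration wins.
      if (!features.has(name)) features.set(name, feature);
    },
    isLoaded(name) {
      return features.has(name);
    },
    getAction(name, actionName) {
      const feature = features.get(name);
      if (!feature) throw new Error(`Feature "${name}" has not been loaded yet`);
      return feature.actions[actionName];
    },
  };
}

const registry = createFeatureRegistry();

// This part would live inside the profile route's chunk, not the main bundle.
registry.register('profile', {
  api: { getProfile: (id) => Promise.resolve({ id, name: 'Sample user' }) },
  actions: {
    fetchProfile: (id) => ({ type: 'profile/FETCH', payload: { id } }),
  },
});

const fetchProfile = registry.getAction('profile', 'fetchProfile');
console.log(fetchProfile(42));
```

The point of the design is that nothing profile-specific is referenced from the main bundle, so the bundler is free to move it into the route chunk.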

After all that hard work, our chunks started looking like this.

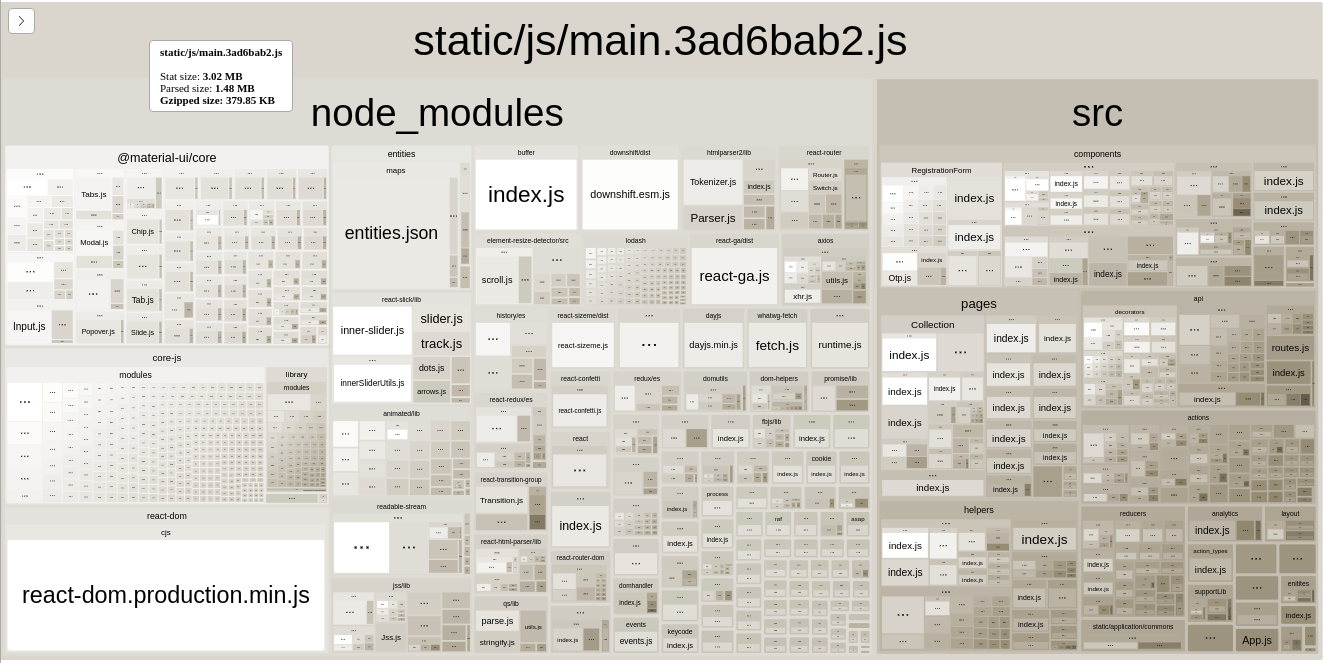

And finally, this is the result of webpack-bundle-analyzer.

main bundle map using webpack-bundle-analyzer after code-splitting

It has a main bundle of less than 200 KB and a few other route-based chunks (20–30 KB each), which we load per route.

[UPDATE] Create React App V2

We recently upgraded to create-react-app v2 and immediately saw a huge improvement in bundle sizes. Our main bundle is now down to ~130 KB, and the initial JavaScript we load is under 170 KB. As Addy Osmani’s great post The Cost Of JavaScript In 2018 suggests, we’ve set a budget of 170–190 KB for our app, and we have to learn to live within it.
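A budget is easier to live within when it is checked automatically. The following is a minimal sketch of such a check, not a tool we are describing from the build: `checkBudget` is a hypothetical helper, and the chunk names and sizes are illustrative numbers chosen to resemble the figures above.

```javascript
// Hypothetical budget check. Sizes are gzipped bytes, as reported by the build.
const BUDGET_KB = 170; // lower bound of the 170–190 KB budget

function checkBudget(initialChunks, budgetKB = BUDGET_KB) {
  const totalBytes = initialChunks.reduce((sum, chunk) => sum + chunk.gzipBytes, 0);
  const totalKB = totalBytes / 1024;
  return {
    totalKB: Math.round(totalKB * 10) / 10, // one decimal place for reporting
    withinBudget: totalKB <= budgetKB,
  };
}

// Illustrative numbers only: a ~130 KB main bundle plus one route chunk.
const report = checkBudget([
  { name: 'main.js', gzipBytes: 130 * 1024 },
  { name: 'home.chunk.js', gzipBytes: 25 * 1024 },
]);

console.log(report);
if (!report.withinBudget) {
  // In CI this could fail the build, for example by setting process.exitCode = 1.
  console.error(`Initial JavaScript is over the ${BUDGET_KB} KB budget`);
}
```

Only the chunks needed for the first render count against the budget; route chunks loaded later are deliberately left out.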

cra2

In summary, these are the approaches and tools we used:

- Dynamic Import

- Code Splitting

- React-loadable

By splitting code we solved one of our major problems: sending more JavaScript than needed to the end user. This reduced page load time significantly, and mobile browsers now have less to download and execute.

Prefetching for the win 🙌

But as we created route-based chunks, we ran into one more problem: whenever a user changed routes on the client side, a new JavaScript chunk had to be fetched, and until it arrived all they would see was an annoying loader.

To solve this problem, the chunks needed to be prefetched and pre-cached, so that they would already be available by the time the user tried to change routes.
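As an illustration of the prefetching idea on its own, independent of how it is wired up in production, here is a small sketch that warms up route chunks while the browser is idle. `prefetchChunks` and `defaultSchedule` are hypothetical helpers, and the loaders are stand-ins for dynamic `import()` calls.

```javascript
// Run a task when the browser is idle, falling back to a timer where
// requestIdleCallback is not available.
function defaultSchedule(task) {
  if (typeof requestIdleCallback === 'function') {
    requestIdleCallback(task);
  } else {
    setTimeout(task, 0);
  }
}

// Hypothetical helper: call every loader in the background.
function prefetchChunks(loaders, schedule = defaultSchedule) {
  loaders.forEach((loader) => {
    schedule(() => {
      // Errors are swallowed: a failed prefetch should never break the page,
      // the chunk will simply be fetched on demand later.
      new Promise((resolve) => resolve(loader())).catch(() => {});
    });
  });
}

// Stand-in loaders; in the app these would be () => import('./routes/...').
const prefetched = [];
prefetchChunks([
  () => { prefetched.push('inbox'); return Promise.resolve(); },
  () => { prefetched.push('matches'); return Promise.resolve(); },
]);
```

Passing the scheduler in as a parameter keeps the helper easy to test and lets the app decide when prefetching is cheap enough to run.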

Finally, we thought of using service workers

We chose Workbox to implement different caching strategies, such as stale-while-revalidate and cache-first. Workbox gave us a simple API for implementing them. Along with precaching critical bundles, we also implemented runtime caching for when a user visits non-critical pages.
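To show what stale-while-revalidate means in practice, here is a small sketch of the strategy in plain JavaScript. This is not Workbox's API or implementation: `createStaleWhileRevalidate`, the `Map`-based cache and the fake `fakeFetch` function are assumptions made so the example runs outside a service worker.

```javascript
// Sketch of the stale-while-revalidate idea with an injectable cache and fetcher.
function createStaleWhileRevalidate(cache, fetcher) {
  return async function handle(url) {
    const cached = cache.get(url);

    // Always start a network request so the cache is refreshed for next time.
    const refresh = fetcher(url).then((response) => {
      cache.set(url, response);
      return response;
    });

    if (cached !== undefined) {
      // Serve the stale copy immediately; ignore background refresh failures.
      refresh.catch(() => {});
      return cached;
    }
    // Nothing cached yet: the caller has to wait for the network.
    return refresh;
  };
}

// Stand-in for the network: each call returns a newer "version" of the asset.
let version = 0;
const fakeFetch = async (url) => `${url}@v${++version}`;

const handle = createStaleWhileRevalidate(new Map(), fakeFetch);

(async () => {
  // First request: cache is empty, so the response comes from the network.
  console.log(await handle('/static/js/inbox.chunk.js'));
  // Second request: the cached copy is returned while a refresh runs in the background.
  console.log(await handle('/static/js/inbox.chunk.js'));
})();
```

Cache-first differs only in the middle step: when a cached copy exists, it is returned without starting a network request at all.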

Precaching assets without caching index.html lets users get the latest version as soon as it is released. We precache JavaScript using the asset-manifest.json that create-react-app generates during the build. We set the cache header of asset-manifest.json to 0 so that it is never served stale and we always get the latest list of JavaScript bundles, which is then used to precache.
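A sketch of how the precache list could be derived from the manifest is shown here. It assumes asset-manifest.json is a flat map of logical names to built file paths (some later create-react-app versions nest this under a `files` key, which the sketch also tolerates). `jsUrlsToPrecache` is a hypothetical helper, and the file names and hashes are made up.

```javascript
// Assumption: the manifest maps logical names to built file paths, either at
// the top level or under a "files" key.
function jsUrlsToPrecache(manifest) {
  const files = manifest.files || manifest;
  return Object.values(files).filter(
    // Keep only JavaScript bundles: no source maps, CSS or index.html.
    (url) => typeof url === 'string' && url.endsWith('.js')
  );
}

// Illustrative manifest; the hashes and file names are made up.
const manifest = {
  'main.js': '/static/js/main.3f2a1b.js',
  'main.js.map': '/static/js/main.3f2a1b.js.map',
  'main.css': '/static/css/main.9c8d7e.css',
  'inbox.js': '/static/js/inbox.5e4d3c.chunk.js',
  'index.html': '/index.html',
};

console.log(jsUrlsToPrecache(manifest));
```

In a service worker, a list like this could be handed to `cache.addAll(urls)` during the `install` event, after fetching the manifest with caching disabled.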

Stale asset caches are automatically cleaned up on each release, and each cache is versioned to avoid cache collisions.
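The cleanup step can be sketched as follows. The naming scheme (`<prefix>-<version>`), the `deleteOldCaches` helper and the in-memory `createFakeCacheStorage` are assumptions for illustration; the fake only mimics the `keys()` and `delete()` methods of the browser's `CacheStorage` so the snippet runs outside a service worker.

```javascript
// Hypothetical naming scheme: "<prefix>-<version>", e.g. "app-assets-v2".
async function deleteOldCaches(cacheStorage, prefix, currentVersion) {
  const current = `${prefix}-${currentVersion}`;
  const names = await cacheStorage.keys();
  // Only touch caches we own (same prefix) that are not the current version.
  const stale = names.filter(
    (name) => name.startsWith(`${prefix}-`) && name !== current
  );
  await Promise.all(stale.map((name) => cacheStorage.delete(name)));
  return stale;
}

// In-memory stand-in for the browser's CacheStorage (`caches`).
function createFakeCacheStorage(names) {
  const store = new Set(names);
  return {
    keys: async () => [...store],
    delete: async (name) => store.delete(name),
  };
}

const fakeCaches = createFakeCacheStorage(['app-assets-v1', 'app-assets-v2', 'other-cache']);
deleteOldCaches(fakeCaches, 'app-assets', 'v2').then((removed) => {
  console.log('removed', removed);
});
```

In a real service worker this would typically run inside the `activate` handler, wrapped in `event.waitUntil(...)` and passed the global `caches` object.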

mobile-lighthouse

desktop-lighthouse

References to tools and strategies used

- Service Workers

- Web App Manifest

- Workbox

- BundlePhobia

- Dynamic Import

- Code Splitting

- React-loadable

- The Cost Of JavaScript In 2018 — Addy Osmani

- Can You Afford It?: Real-world Web Performance Budgets

- PRPL Pattern

- User-centric Performance Metrics

- Redux modules and code-splitting

- Dan Abramov’s Splitting Approach